NVIDIA Grace CPU Superchip

The hardware powering every Isambard 3 node

GW4 Isambard 3 Practical Workshop — 21 April 2026

Two Grace CPUs in one module

What “Superchip” means

A Grace CPU Superchip packages two NVIDIA Grace CPUs on a single compact module.

Cores

- 144 Arm Neoverse V2 cores across the Superchip

- 72 cores per Grace CPU

The interconnect

- The two CPUs are linked by NVLink-C2C (Chip-to-Chip)

- 900 GB/s bidirectional bandwidth between them — far faster than PCIe

This tight coupling is why the Superchip behaves more like a single processor than a conventional dual-socket server.

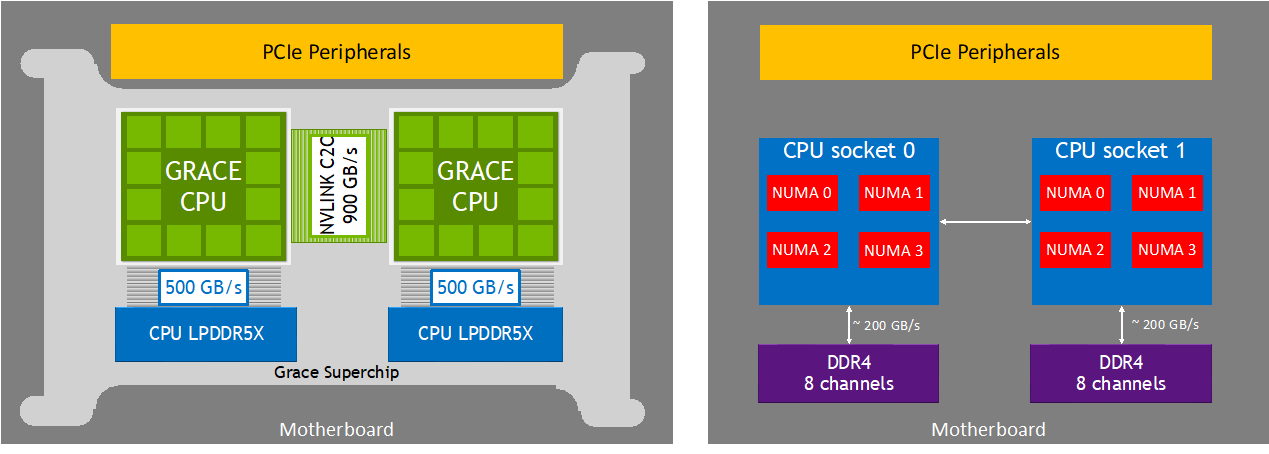

NUMA topology: simpler than a conventional server

Two NUMA nodes, one Superchip

Within each Grace CPU, cores, cache, memory, and I/O are connected by the NVIDIA Scalable Coherency Fabric (SCF) — a high-bandwidth mesh.

- One Grace CPU = one NUMA node

- One Superchip = two NUMA nodes total

- Cross-NUMA traffic travels the 900 GB/s NVLink-C2C link

Conventional dual-socket servers may expose four or more NUMA nodes, linked by slower inter-socket interconnects. The Grace layout is notably simpler.

Practical rule: treat each node as two NUMA zones, each with 72 cores and ~120 GB of memory.

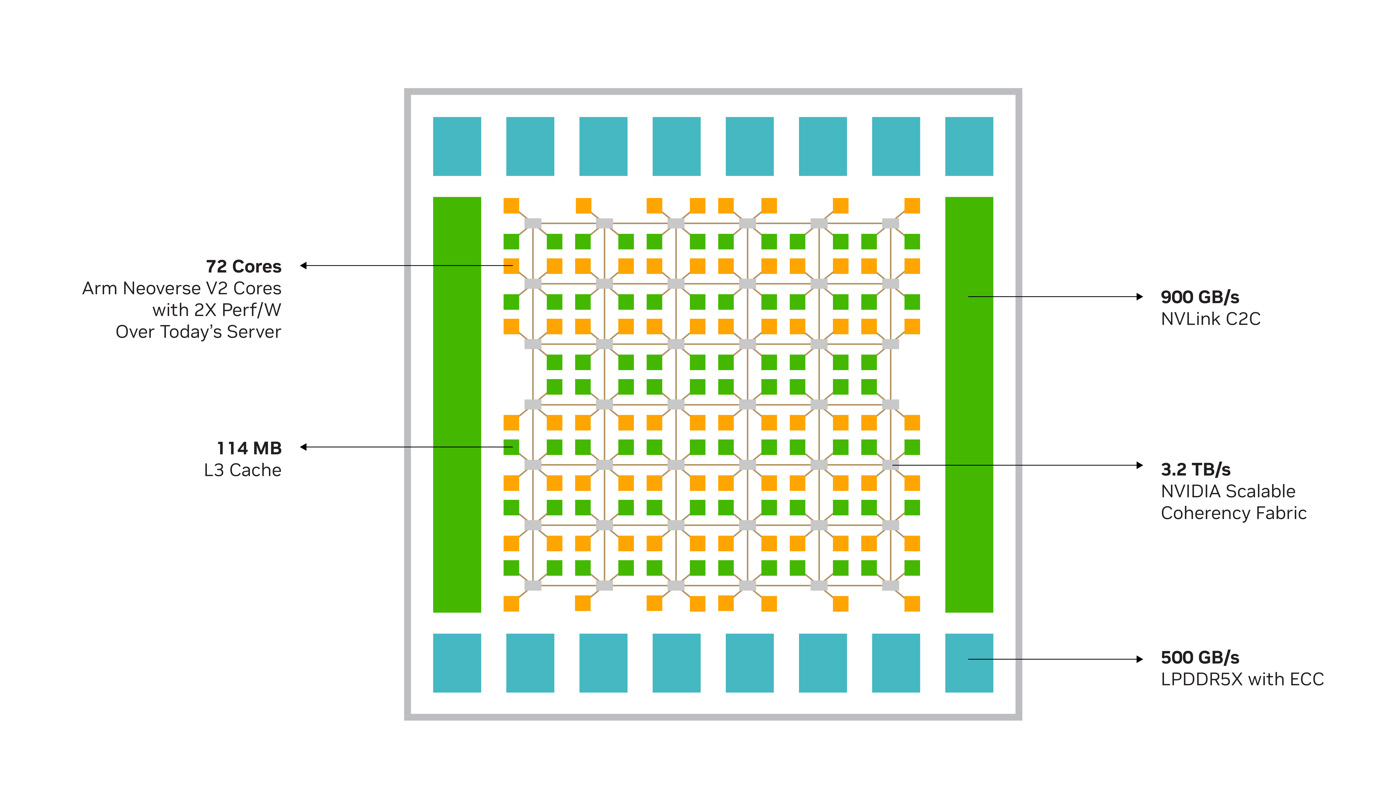

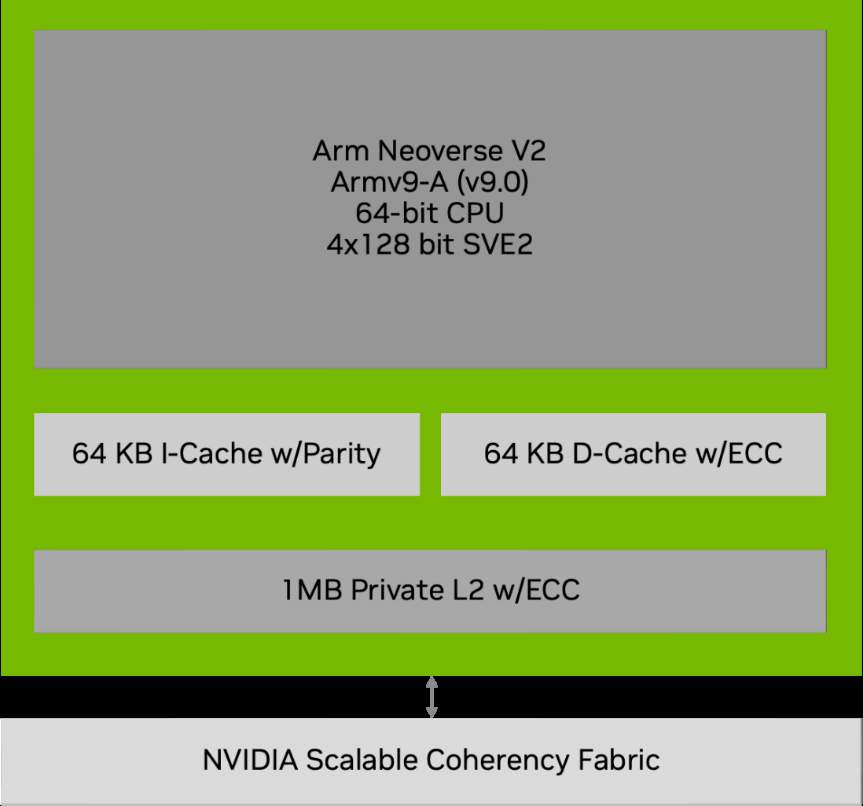
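The practical rule above can be turned into a quick placement check. The following is a minimal sketch, not an Isambard 3 tool: it assumes cores 0 to 71 belong to NUMA node 0 and cores 72 to 143 to NUMA node 1 (contiguous numbering is an assumption; confirm it on a compute node with `lscpu` or `numactl --hardware`), and the names `rank_layout` and `straddles_numa` are hypothetical. It splits a node's cores evenly among ranks and reports whether any rank would span both NUMA nodes.

```python
CORES_PER_NUMA = 72   # from the slide: 72 cores per Grace CPU
NUMA_NODES = 2        # from the slide: two NUMA nodes per Superchip


def rank_layout(ranks_per_node):
    """Split 144 cores evenly among ranks.

    Returns a list of (rank, first_core, last_core, numa_nodes) tuples.
    """
    total = CORES_PER_NUMA * NUMA_NODES
    if ranks_per_node < 1 or total % ranks_per_node != 0:
        raise ValueError("ranks_per_node must divide 144 evenly")
    width = total // ranks_per_node
    layout = []
    for rank in range(ranks_per_node):
        first = rank * width
        last = first + width - 1
        # Assumed contiguous numbering: core c lives on NUMA node c // 72.
        nodes = sorted({first // CORES_PER_NUMA, last // CORES_PER_NUMA})
        layout.append((rank, first, last, nodes))
    return layout


def straddles_numa(ranks_per_node):
    """True if at least one rank's cores span both NUMA nodes."""
    return any(len(nodes) > 1 for _, _, _, nodes in rank_layout(ranks_per_node))


if __name__ == "__main__":
    for n in (1, 2, 3, 4):
        print(n, "ranks per node -> straddles NUMA boundary:", straddles_numa(n))
```

Under these assumptions, any even rank count that divides 144 keeps every rank inside one NUMA node, while an odd count such as 3 leaves one rank spanning both.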

Inside a single Grace CPU die

72 cores, a mesh fabric, and co-packaged LPDDR5X

Compute

72 × Arm Neoverse V2 cores

Cache hierarchy

- 64 KB L1 I-cache + 64 KB L1 D-cache per core

- 1 MB L2 cache per core (private)

- 114 MB distributed L3 cache shared across all 72 cores

Internal fabric

3.2 TB/s NVIDIA Scalable Coherency Fabric connecting cores, L3, memory, and I/O

Off-die link

900 GB/s NVLink-C2C to the second Grace CPU

Memory: LPDDR5X co-packaged for bandwidth

240 GB at up to 1 TB/s

Grace uses LPDDR5X with ECC, physically co-packaged with the CPU dies on the same module.

Capacity

240 GB total on this Superchip, split as 2 × 120 GB — one 120 GB NUMA node per Grace CPU.

Bandwidth

| Scope | Peak bandwidth |

|---|---|

| Per Grace CPU | up to 512 GB/s |

| Per Grace CPU Superchip | up to 1 TB/s |

Why it matters

Co-packaging eliminates the off-module interconnect bottleneck. The result is unusually high bandwidth for a CPU platform — competitive with some HBM-equipped accelerators.

Memory-bandwidth-sensitive codes (FFTs, sparse solvers, molecular dynamics) often benefit the most from Grace.
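To see why such codes are limited by memory rather than compute, a simple roofline-style estimate helps. This sketch is an illustration, not a measurement: it uses only the peak figures quoted in this deck (2 × 512 GB/s memory bandwidth, 7.1 TFLOPS FP64) and assumes a triad-like kernel `a[i] = b[i] + s * c[i]` that performs 2 FLOPs per 24 bytes of FP64 traffic. Sustained bandwidth on real hardware is below peak, so treat the result as an upper bound.

```python
PEAK_BW_BYTES_PER_S = 2 * 512e9   # two Grace CPUs at up to 512 GB/s each
PEAK_FP64_FLOPS = 7.1e12          # published Superchip FP64 peak


def attainable_flops(flops_per_byte):
    """Roofline bound: the lower of compute peak and bandwidth x intensity."""
    return min(PEAK_FP64_FLOPS, PEAK_BW_BYTES_PER_S * flops_per_byte)


# Arithmetic intensity above which a kernel is no longer bandwidth-bound.
ridge_point = PEAK_FP64_FLOPS / PEAK_BW_BYTES_PER_S

# Triad-like kernel (assumed): 2 FLOPs per element, 3 x 8-byte accesses.
triad_intensity = 2 / 24

if __name__ == "__main__":
    print(f"ridge point: {ridge_point:.1f} FLOP/byte")
    print(f"triad bound: {attainable_flops(triad_intensity) / 1e9:.0f} GFLOPS")
```

With these inputs the triad bound comes out at about 85 GFLOPS, a little over 1% of the FP64 peak, which is why raising memory bandwidth matters more than raising FLOPS for this class of kernel.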

Vectorization: SVE2 and NEON

Four 128-bit SIMD units per core

Each Neoverse V2 core contains four 128-bit SIMD units supporting two instruction sets.

NEON

Fixed 128-bit width; the standard Arm SIMD set. Widely supported across compilers and libraries.

SVE2 (Scalable Vector Extension 2)

Armv9-A feature. On Neoverse V2 it also runs at 128 bits, but SVE2 code is vector-length agnostic: the same binary can run on implementations with wider vectors without recompilation.
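A quick way to confirm which SIMD extensions a node exposes is to read the `Features` line that Linux on AArch64 publishes in `/proc/cpuinfo`, where NEON appears as `asimd` and the scalable extensions as `sve` and `sve2`. The sketch below parses that text. The helper name `simd_features` is hypothetical, and nothing here is output captured from an Isambard 3 node.

```python
def simd_features(cpuinfo_text):
    """Report which of NEON (asimd), SVE, and SVE2 appear in the Features line."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "Features":
            flags = set(value.split())
            return {name: name in flags for name in ("asimd", "sve", "sve2")}
    # No Features line found (for example, on a non-Arm machine).
    return {"asimd": False, "sve": False, "sve2": False}


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as handle:
            print(simd_features(handle.read()))
    except FileNotFoundError:
        print("/proc/cpuinfo is not available on this system")
```

Flags are matched as whole words, so a related flag such as `sveaes` is not mistaken for `sve`.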

Compiling for best performance

Use `-mcpu=neoverse-v2` with the GNU compiler (the recommended path on Isambard 3):

- GCC: `-mcpu=neoverse-v2`

- Via Cray wrappers (`cc`, `CC`, `ftn`): add `-mcpu=neoverse-v2` to your flags

On Arm, `-mcpu` sets both the architecture target and the tuning in one flag. This differs from the x86 convention, where `-march` plays that role; on Arm, `-march` selects only the architecture and leaves tuning to `-mtune`.

Peak FP64 performance: 7.1 TFLOPS

Back-of-the-envelope from first principles

Per core, per cycle — FP64:

\[\text{FLOPS/cycle per core} = (\text{elements per vector}) \times (\text{vector units}) \times (\text{ops per FMA})\]

\[\text{FLOPS/cycle per core} = 2 \times 4 \times 2 = 16\]

Scaling to the full Superchip at 3.1 GHz base frequency:

\[\text{Total FP64 Peak} = 144 \times 3.1 \times 10^{9} \times 16 \approx 7.1 \text{ TFLOPS}\]

NVIDIA’s published figure is 7.1 TFLOPS FP64 peak, consistent with the 3.1 GHz base frequency. At the 3.0 GHz all-core SIMD frequency the same calculation gives ≈ 6.9 TFLOPS — the difference is simply which frequency NVIDIA chose to publish.
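The same estimate can be reproduced in a few lines. This is a sketch of the arithmetic above using only numbers from this slide; the function name `peak_fp64_tflops` is hypothetical.

```python
CORES = 144        # cores per Superchip
SIMD_BITS = 128    # width of each SIMD unit
SIMD_UNITS = 4     # SIMD units per core
FP64_BITS = 64     # size of one double-precision element
OPS_PER_FMA = 2    # a fused multiply-add counts as two FLOPs


def peak_fp64_tflops(freq_ghz):
    """Theoretical FP64 peak for the whole Superchip at the given clock."""
    # Elements per vector x vector units x ops per FMA = 2 * 4 * 2 = 16.
    flops_per_cycle = (SIMD_BITS // FP64_BITS) * SIMD_UNITS * OPS_PER_FMA
    return CORES * freq_ghz * 1e9 * flops_per_cycle / 1e12


if __name__ == "__main__":
    for ghz in (3.1, 3.0):
        print(f"{ghz} GHz -> {peak_fp64_tflops(ghz):.2f} TFLOPS")
```

These are theoretical peaks that assume every SIMD unit issues an FMA on every cycle; real applications sustain less.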

Grace CPU Superchip: key numbers

What to remember when planning your jobs

144 Arm Neoverse V2 cores per node

2 NUMA nodes per compute node (72 cores + 120 GB each)

240 GB LPDDR5X memory per node, with ECC

1 TB/s peak memory bandwidth per node

900 GB/s NVLink-C2C between the two CPUs

7.1 TFLOPS FP64 peak per node

Each node in Isambard 3 is one Grace CPU Superchip. Across 384 nodes: 55,296 cores and ~92 TB of total memory.
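The system totals follow directly from the per-node figures, taking 1 TB as 1000 GB:

\[\text{Total cores} = 384 \times 144 = 55{,}296\]

\[\text{Total memory} = 384 \times 240 \text{ GB} = 92{,}160 \text{ GB} \approx 92 \text{ TB}\]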